“I’m investing in AI stocks” used to be a thing you could say and everyone would know exactly what you meant. You’re buying the Mag 7 minus Apple, adding in Broadcom, Oracle, Dell and IBM. Basically you had the whole trade right there. You could do some electrical utilities or a few optical networking stocks on the side if you wanted to, but it was unnecessary. All you needed to express the theme was an exaggerated version of the Nasdaq with an even bigger overweight to the MSFTs and the GOOGLs and the NVDAs and you were all set.

I think it’s not going to be so simple in 2026. We are starting to get a sense that there’s a Coke and Pepsi now. Both could win, but being “right” on AI might involve making a choice. I’m going to leave the Amazon AWS / Claude / Anthropic team out of today’s discussion for the sake of clarity but, yes, I know that on the metric of enterprise / corporate customers the Anthropic world represents the largest AI project in the world.

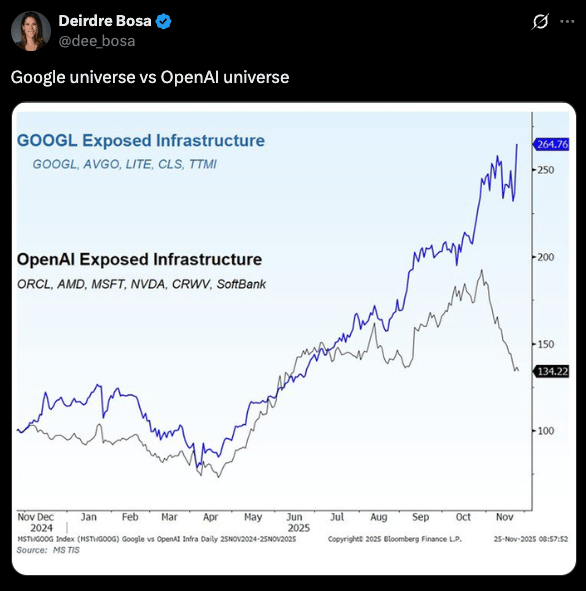

The more interesting story at the moment - and perhaps the most consequential - is the dichotomy between the Alphabet / Google Cloud AI universe and the OpenAI / Oracle / Microsoft universe. This chart Deirdre Bosa tweeted was making the rounds this week (I don’t know where it’s from, otherwise I would have grabbed the original and credited it).

The upshot is that over the last few weeks investors began choosing sides and making a more pronounced wager on one or the other version of a massive AI platform. And the bets seem to be disproportionately on Alphabet’s vertically integrated version vs OpenAI’s sprawling taxonomy of partnerships, products and projects with third parties.

This is now a different phase of the AI bull market, where investors are more discerning and drawing distinctions between the rising and falling of the different ecosystems. Going long the Mag 7 + Broadcom or AMD is no longer the no-brainer it had been since the launch of ChatGPT in the fall of 2022. Now we got ourselves a horse race.

Google vs OpenAI

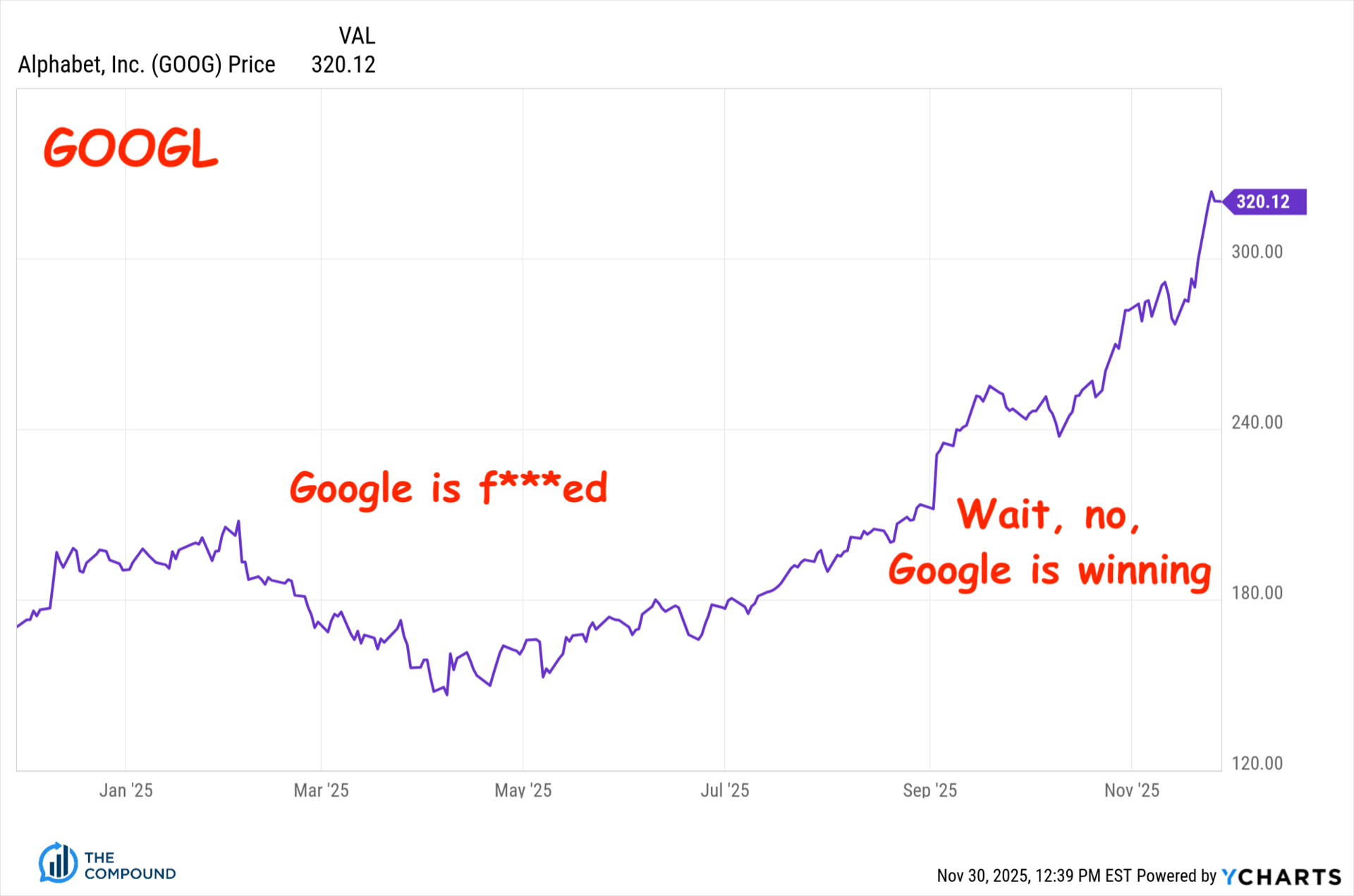

I made this for you

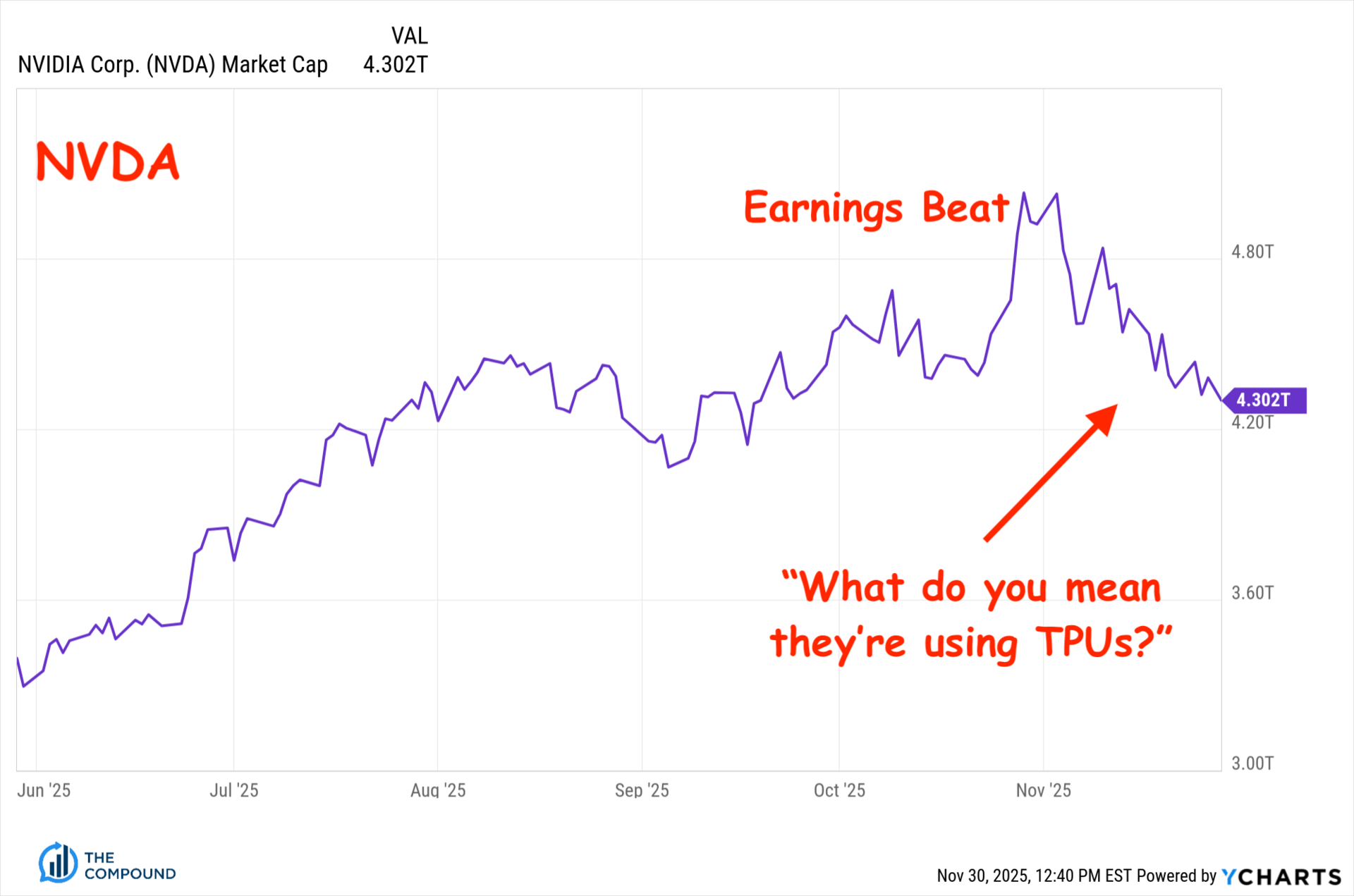

One of the main differentiators between the OpenAI approach and what Alphabet’s Google Cloud unit is doing is the new buzzword du jour (same as the old buzzword du jour): Efficiency. Alphabet has revealed its growing self-reliance on the Tensor Processing Units, or TPUs, it makes itself, as opposed to being entirely beholden to higher-priced GPUs from Nvidia. Now that everyone on Wall Street is back to being enamored with efficiency as opposed to outrageously bold capex pronouncements, the Alphabet story is the better one.

Alphabet’s been making its own TPU application-specific integrated circuits, or ASICs, for a decade now, so this isn’t new. What is new is how much mileage they’re getting out of these chips. During the reveal of Gemini 3 over the last week, Google went out of its way to point out that the new model runs on TPUs. Investors went wild for this news because it looks, sounds and feels like a massive potential advantage for the company. It casts the OpenAI strategy of enormous capex spends with outside partners in a less desirable light.

not the Uno Reverse!

Google is flexing now just at the moment when Valley-wide doubt about Sam Altman’s plan has spilled over from the hills of San Francisco into the canyons of Wall Street. Narrative shift, not game over, but still. You can feel it. It’s palpable. If OpenAI had a publicly traded stock, its chart would probably not look much like Alphabet’s. What a difference a few months can make in this game. This summer, Google looked like it was falling behind - and perhaps it was. Now the shoe is on the other foot. Google rose to the challenge, investors have recognized it and now they’re flipping their bets.

A word on TPUs

Google has turned the latest version of its TPU chip, named Ironwood, into an investor storyline, not just a technical aspect of its cloud business. By running massive clusters of these chips, the company has created what is being referred to as an AI bullet train for users of its models.

Here’s a picture the company shared of 9,000 of these Ironwood TPUs working together to power the company’s advantage:

AI Hypercomputer

Here’s what Google told the world last week about the importance of their homegrown chips at their own Keyword blog:

As the industry’s focus shifts from training frontier models to powering useful, responsive interactions with them, Ironwood provides the essential hardware. It’s custom built for high-volume, low-latency AI inference and model serving. It offers more than 4X better performance per chip for both training and inference workloads compared to our last generation, making Ironwood our most powerful and energy-efficient custom silicon to date…

TPUs are a key component of AI Hypercomputer, our integrated supercomputing system designed to boost system-level performance and efficiency across compute, networking, storage and software. At its core, the system groups individual TPUs into interconnected units called pods. With Ironwood, we can scale up to 9,216 chips in a superpod. These chips are linked via a breakthrough Inter-Chip Interconnect (ICI) network operating at 9.6 Tb/s

Ironwood is the result of a continuous loop at Google where researchers influence hardware design, and hardware accelerates research. While competitors rely on external vendors, when Google DeepMind needs a specific architectural advancement for a model like Gemini, they collaborate directly with their TPU engineer counterparts. As a result, our models are trained on the newest TPU generations, often seeing significant speedups over previous hardware.

When reports began to hit the tape about Meta being in talks to utilize Google’s TPU chips in its own data centers, something that hadn’t been done before, Alphabet’s shareholders were sent into ecstasy while Nvidia sank.

uh oh

Meta’s data center strategy has largely hinged upon its expensive accumulation of cutting-edge GPUs from Nvidia - the more the better at almost any cost - and this news was the first inkling that there might be some rethinking amongst the top spenders on AI infrastructure.

Nvidia’s CEO, Jensen Huang, issued a tersely worded statement about how happy he is for Google (he’s definitely f***ing not) along with a sneak diss about how ASICs will never be an adequate replacement for the power of GPUs. The stock market isn’t sure about this. If you were pricing in 70% profit margins for Nvidia as far as the eye can see, you may have been knocked off balance by this recent development. It’s true that Nvidia’s software layer, CUDA, is what keeps the entire ecosystem in the palm of Jensen’s hand. Shareholders are now going to be relying heavily on that software’s continued ubiquity and dominance among developers, deployers and distributors of AI services.

Barron’s tackled the Nvidia GPU vs Google TPU story heading into the weekend, sharing a snippet below…

Nvidia’s chips are still called graphics processing units though their use has gone well beyond driving personal computer monitors. GPUs are very good at splitting tasks into many pieces and running them side-by-side, which is how gamers can play at 120 frames per second. This is also what makes them so useful for AI calculation, as well as other high-performance domains.

TPUs, on the other hand, do one thing—matrix math for deep learning—but they do it very well. Under the right circumstances they can provide a much better cost structure than Nvidia GPUs. Deep learning has been the main thrust of AI research for over a decade now, leading us to the large language models that are powering new AI applications like chatbots and coding assistants. GPUs can do a lot more, but for many current AI loads, TPU is like a bullet train. It only goes from one place to another, but if that’s all you want to do, it’s very fast.

This isn’t a technical blog or an AI blog - I write about the markets. You’re welcome to go deeper into all the related rabbit holes on TPUs, GPUs, data center architecture etc. I just wanted to bring the story to you from an investing perspective because this was the week where the AI theme became less of an industry catch-all tailwind and more of a cage match. You may be forced to take a side as you allocate. You should at least be aware of the sides you’re choosing between.

Peter Boockvar on TCAF

let me explain something to you

We talked about this story and lots of other critical stuff on the latest episode of The Compound and Friends starring our friend Peter Boockvar. We didn’t want to leave you guys without an episode for the holiday weekend. We’re lucky to have brilliant people like Peter in our world who can show up and just absolutely cook on everything from inflation to earnings, AI to gold and oil. This episode was a slap.

Peter crushed it

All the links you need to watch and listen are below.

Thanks for reading, hope you’re having a great weekend, talk soon! - JB